Read: Key points in Module 1

Understanding Artificial Intelligence: A Comprehensive Overview

This document provides a comprehensive overview of Artificial Intelligence (AI), differentiating it from related concepts like Machine Learning (ML) and Deep Learning (DL). It explores the growing importance of AI in today’s world and addresses common myths and misconceptions surrounding this transformative technology.

What is AI?

Artificial Intelligence (AI) is a broad field of computer science focused on creating machines that can perform tasks that typically require human intelligence. These tasks include:

- Learning: Acquiring information and rules for using the information.
- Reasoning: Using rules to reach conclusions (either definite or probabilistic).
- Problem-solving: Formulating problems, generating solutions, and evaluating them.
- Perception: Acquiring information about the environment through sensors (e.g., vision, sound).
- Language understanding: Understanding and generating human language.

AI aims to replicate or simulate human cognitive functions, enabling machines to perceive, learn, reason, and act autonomously. It encompasses a wide range of approaches, from rule-based systems to complex neural networks.

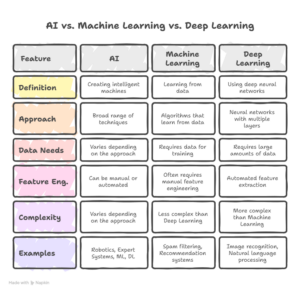

AI vs. Machine Learning vs. Deep Learning

It’s crucial to understand the relationship between AI, Machine Learning (ML), and Deep Learning (DL). The terms are often used interchangeably, but they represent different levels of specificity:

- AI (Artificial Intelligence): The overarching concept of creating intelligent machines. It’s the broadest category.
- ML (Machine Learning): A subset of AI that focuses on enabling machines to learn from data. Instead of being explicitly programmed with rules, ML algorithms learn patterns and relationships from data to make predictions or decisions.
- DL (Deep Learning): A subset of ML that uses artificial neural networks with multiple layers (hence “deep”) to analyze data. These deep neural networks can learn complex patterns and representations from large amounts of data, often outperforming traditional ML algorithms in tasks like image recognition, natural language processing, and speech recognition.

Think of it as a set of nested circles: AI is the largest circle, containing ML, which in turn contains DL. All Deep Learning is Machine Learning, and all Machine Learning is Artificial Intelligence, but the reverse is not true.
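To make the difference between explicit rules and learning from data concrete, here is a minimal Python sketch. It is an illustration only: the spam scenario, the toy data, and the names `rule_based_is_spam` and `learn_threshold` are assumptions made up for this example, not part of any real system. The first function uses a threshold chosen by the programmer; the second derives a threshold from labeled examples.

```python
# Toy labeled data, made up for illustration:
# (number of exclamation marks in a message, whether it was spam)
EXAMPLES = [(0, False), (1, False), (2, False), (4, True), (5, True), (7, True)]


def rule_based_is_spam(exclamation_count: int) -> bool:
    """Rule-based approach: the programmer hard-codes the threshold."""
    return exclamation_count >= 3


def learn_threshold(examples):
    """Learning approach: pick the threshold with the fewest mistakes on the data."""
    candidates = sorted({count for count, _ in examples})

    def errors(threshold):
        # Count examples where the prediction disagrees with the label.
        return sum((count >= threshold) != label for count, label in examples)

    return min(candidates, key=errors)


learned_threshold = learn_threshold(EXAMPLES)


def learned_is_spam(exclamation_count: int) -> bool:
    """Classifier whose threshold came from the data, not from the programmer."""
    return exclamation_count >= learned_threshold


print(learned_threshold)   # 4 for the toy data above
print(learned_is_spam(6))  # True
```

If the examples change, the learned threshold changes with them, while the hand-written rule stays the same until someone edits the code. Real ML algorithms learn far richer patterns than a single threshold, but the division of labor is the same.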

Why AI Matters Today

AI is rapidly transforming various aspects of our lives and industries. Its importance stems from its potential to:

- Automate tasks: AI can automate repetitive and mundane tasks, freeing up human workers to focus on more creative and strategic activities.
- Improve efficiency: AI-powered systems can optimize processes, reduce errors, and improve overall efficiency in various industries.
- Enhance decision-making: AI can analyze vast amounts of data to identify patterns and insights that humans might miss, leading to better-informed decisions.
- Personalize experiences: AI can personalize products, services, and experiences based on individual preferences and needs.
- Solve complex problems: AI can be used to tackle complex problems in areas such as healthcare, climate change, and cybersecurity.

Specific examples of AI’s impact:

- Healthcare: AI is used for disease diagnosis, drug discovery, personalized medicine, and robotic surgery.
- Finance: AI is used for fraud detection, risk management, algorithmic trading, and customer service.
- Manufacturing: AI is used for predictive maintenance, quality control, and supply chain optimization.
- Transportation: AI is used for self-driving cars, traffic management, and logistics optimization.
- Retail: AI is used for personalized recommendations, inventory management, and customer service.

The increasing availability of data, advancements in computing power, and breakthroughs in algorithms have fueled the rapid growth of AI in recent years. As AI technology continues to evolve, its impact on society and the economy is likely to deepen.

Myths & Misconceptions

Despite its growing prominence, AI is often surrounded by myths and misconceptions:

- Myth: AI will take over the world. This is a common trope in science fiction, but it’s not a realistic concern in the foreseeable future. Current AI systems are designed for specific tasks and lack the general intelligence and consciousness necessary to pose an existential threat.
- Myth: AI will replace all human jobs. While AI will automate some jobs, it will also create new jobs and augment existing ones. The focus should be on adapting to the changing job market and developing skills that complement AI.
- Myth: AI is always objective and unbiased. AI algorithms are trained on data, and if that data reflects existing biases, the AI system is likely to reproduce those biases. It’s crucial to address bias in data and algorithms to ensure fairness and equity.
- Myth: AI is magic. AI is not magic; it’s a complex technology that relies on mathematical and statistical principles. Understanding these principles is essential for developing and deploying AI responsibly.
- Myth: AI is only for tech companies. AI is relevant to all industries and organizations, regardless of size or sector. Any organization that generates or uses data can potentially benefit from AI.
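The point about biased data can be sketched in a few lines of Python. This is a deliberately simplified illustration: the groups, the historical decisions, and the `train` function are all made up for this example, and the "model" simply memorizes the majority past decision for each case. It shows how a system trained on skewed decisions can repeat them.

```python
from collections import defaultdict

# Made-up historical decisions: (group, qualified, approved).
# Qualified applicants from group "B" were mostly rejected in the past.
HISTORY = [
    ("A", True, True), ("A", True, True), ("A", False, False), ("A", True, True),
    ("B", True, False), ("B", True, False), ("B", False, False), ("B", True, True),
]


def train(history):
    """Learn the majority past decision for each (group, qualified) pair."""
    counts = defaultdict(lambda: [0, 0])  # key -> [rejections, approvals]
    for group, qualified, approved in history:
        counts[(group, qualified)][int(approved)] += 1
    return {
        key: approvals > rejections
        for key, (rejections, approvals) in counts.items()
    }


model = train(HISTORY)

# Two equally qualified applicants get different predictions,
# only because the historical decisions differed by group.
print(model[("A", True)])  # True
print(model[("B", True)])  # False
```

Nothing in the code mentions a preference for either group; the difference in output comes entirely from the training data.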

By dispelling these myths and misconceptions, we can foster a more informed and realistic understanding of AI’s potential and limitations. This will enable us to harness its power for good and mitigate its potential risks.